The open source NGINX software is very popular as a frontend server for many hosting providers, CDNs, and other high‑volume platform providers. Caching, reverse proxying, and serving static files are among the most widely used features. However, there is a particular use case when it can be complicated to use a build of the open source NGINX software – large‑volume hosting.

In this blog post, we’ll explain how you can use NGINX Plus features to make large‑volume hosting easier and more reliable, freeing up engineering and operations resources for other critical tasks.

Imagine a hosting company using a reverse proxy frontend to handle a large number of hostnames for its customers. Since every day new customers appear and current customers leave, the NGINX configuration needs to be updated quite frequently. In addition, the use of containers and cloud infrastructure requires DevOps to move resources constantly between different physical and virtual hosts.

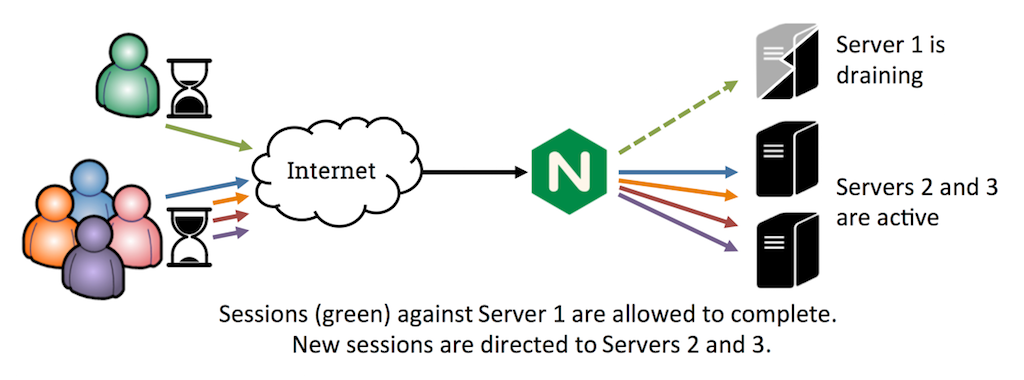

With open source NGINX, reconfiguring a group of upstream (backend) servers requires you to reload the configuration. Reloading the configuration causes zero downtime, but doing it while NGINX is under high load can cause problems. One reason is that all worker processes have to finish serving established connections before being terminated, a process known as draining. The delay can be lengthy if there are:

- Large file downloads in progress

- Clients using the WebSocket protocol

- Clients with slow connectivity (3G, mobile)

- Slowloris attacks

Reloading multiple times without giving NGINX enough time to terminate the worker processes can lead to increased memory use and eventually can overload the system.

A large number of worker processes in shutting down state can indicate this problem:

    [user@frontend ]$ ps afx | grep nginx
      652 ?  Ss  0:00 nginx: master process /usr/sbin/nginx -c /etc/nginx/nginx.conf
     1300 ?  S   0:00  \_ nginx: worker process is shutting down
     1303 ?  S   0:00  \_ nginx: worker process is shutting down
     1304 ?  S   0:00  \_ nginx: worker process is shutting down
     1323 ?  S   0:00  \_ nginx: worker process is shutting down
     1324 ?  S   0:00  \_ nginx: worker process is shutting down
     1325 ?  S   0:00  \_ nginx: worker process is shutting down
     1326 ?  S   0:00  \_ nginx: worker process is shutting down
     1327 ?  S   0:00  \_ nginx: worker process is shutting down
     1329 ?  S   0:00  \_ nginx: worker process is shutting down
     1330 ?  S   0:00  \_ nginx: worker process is shutting down
     1334 ?  S   0:00  \_ nginx: worker process
     1335 ?  S   0:00  \_ nginx: worker process
     1336 ?  S   0:00  \_ nginx: worker process
     1337 ?  S   0:00  \_ nginx: worker process

Check out this configuration file for more technical details: https://www.nginx.com/resource/conf/slow-reload.conf.
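The check above is easy to automate. The following is a minimal sketch, not part of NGINX or NGINX Plus: the function names `count_draining_workers` and `safe_to_reload` and the default limit of 4 are our own assumptions for illustration. It counts workers in the shutting down state in a process listing and reports whether another reload looks safe.

```shell
# Count NGINX worker processes that are still draining after a reload.
# Reads a process listing (for example, `ps afx` output) on standard input.
count_draining_workers() {
    # grep -c prints 0 and exits non-zero when nothing matches, hence "|| true".
    grep -c 'nginx: worker process is shutting down' || true
}

# Succeeds (exit status 0) only if the number of draining workers in the
# listing on standard input is at or below the limit given as $1.
# The default limit of 4 is an arbitrary placeholder, not a recommendation.
safe_to_reload() {
    limit="${1:-4}"
    draining=$(count_draining_workers)
    [ "$draining" -le "$limit" ]
}
```

With these functions loaded, a deployment script could run `ps afx | safe_to_reload 2 && nginx -s reload` to skip a reload while more than two old workers are still draining. Reading the listing from standard input, rather than calling `ps` inside the function, keeps the logic easy to try against saved output.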

Solution: On-the-Fly Reconfiguration

To prevent these problems, we developed on‑the‑fly reconfiguration, which enables you to use an API to change the backend server configuration. This feature is available in our commercial offering, NGINX Plus.

Another NGINX Plus feature that can be used together with on‑the‑fly reconfiguration is session route persistence, in which you specify the particular server used for a given request. A server route can be created based on any variable found in the request. Commonly used variables include cookie‑related variables like $cookie_jsessionid and IP‑address variables like $remote_addr. In the following example we use the $http_host variable, which corresponds to the Host header.

This fully commented example combines the on‑the‑fly reconfiguration and session route persistence features.

    http {
        upstream vhosts {
            zone vhosts 128k;        # Shared memory zone required for all dynamic upstreams.
            sticky route $http_host; # Route session persistence based on the 'Host' header.

            # We will define backend servers with the on-the-fly reconfiguration API, so
            # no servers are listed here.
        }

        server {
            listen 80;          # Production frontend listens on this port
            status_zone vhosts; # Status zone used for monitoring with the "Status" module

            location / {
                proxy_pass http://vhosts;
                proxy_set_header Host $http_host;
            }
        }

        server {
            listen 8888; # This server block is used for upstream management;
                         # we use a different port that can be secured by the firewall.
                         # Another method is listening on a different IP address.

            allow 10.0.0.0/24;  # Additional security layer for this management server
            allow 127.0.0.1/32;
            deny all;

            root /usr/share/nginx/html; # Location of NGINX Plus static files

            location /upstream_conf {
                upstream_conf; # Endpoint for the on-the-fly reconfiguration API
            }

            location = /status.html { } # HTML page for NGINX Plus dashboard

            location /status {
                status; # Endpoint for the live activity monitoring API
            }
        }
    }

The complete configuration is available for download at https://www.nginx.com/resource/conf/upstream-host-route.conf.

On-the-Fly Reconfiguration with the upstream_conf API

Notice that the upstream block in this configuration does not include any server directives to define the hostnames of backend servers. We are going to define them dynamically, using the upstream_conf API. An easy way to access the API is with curl commands.

Let’s say that we need to add the following customers into our configuration:

| Customer hostname | Backend server IP address and port |
|---|---|
| www.company1.com | 127.0.0.1:8080 |
| www.company2.com | 10.0.0.10:8000 |
| www.homepage1.com | 10.0.0.10:8001 |
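Every upstream_conf request used to add a server in this post follows the same pattern: the backend address goes in the `server` parameter and the customer hostname in the `route` parameter. As a rough sketch, a mapping like the one in the table can be turned into request URLs mechanically. The function names `add_url` and `add_urls_from_list`, the `API_BASE` and `UPSTREAM` variables, and the "hostname backend" input format are our own assumptions for illustration.

```shell
# Illustrative defaults matching the example configuration in this post;
# override them for your own setup.
API_BASE="${API_BASE:-http://127.0.0.1:8888/upstream_conf}"
UPSTREAM="${UPSTREAM:-vhosts}"

# Build the upstream_conf "add" URL for one customer.
#   $1 = customer hostname (used as the route)
#   $2 = backend address in IP:port form
add_url() {
    printf '%s?add=&upstream=%s&server=%s&route=%s\n' \
        "$API_BASE" "$UPSTREAM" "$2" "$1"
}

# Read "hostname backend" pairs from standard input, one per line, and print
# the corresponding URLs. Blank lines and lines starting with # are skipped.
add_urls_from_list() {
    while read -r host backend; do
        case "$host" in ''|'#'*) continue ;; esac
        add_url "$host" "$backend"
    done
}
```

Given a hypothetical file `customers.txt` with one pair per line, `add_urls_from_list < customers.txt | xargs -n 1 curl -s` would issue one request per customer. Printing URLs instead of calling curl directly makes it possible to review the batch before anything is sent to the API.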

To add the customers, we run the following commands that access the upstream_conf API. We’re logged into the shell on the NGINX Plus host, but it’s possible to run these commands from any secure location:

    [user@frontend ]$ curl 'http://127.0.0.1:8888/upstream_conf?add=&upstream=vhosts&server=127.0.0.1:8080&route=www.company1.com'
    server 127.0.0.1:8080 route=www.company1.com; # id=0
    [user@frontend ]$ curl 'http://127.0.0.1:8888/upstream_conf?add=&upstream=vhosts&server=10.0.0.10:8000&route=www.company2.com'
    server 10.0.0.10:8000 route=www.company2.com; # id=1
    [user@frontend ]$ curl 'http://127.0.0.1:8888/upstream_conf?add=&upstream=vhosts&server=10.0.0.10:8001&route=www.homepage1.com'
    server 10.0.0.10:8001 route=www.homepage1.com; # id=2

To check the current state of the upstream servers, run the list command:

    [user@frontend ]$ curl 'http://127.0.0.1:8888/upstream_conf?list=&upstream=vhosts'
    server 127.0.0.1:8080 route=www.company1.com; # id=0
    server 10.0.0.10:8000 route=www.company2.com; # id=1
    server 10.0.0.10:8001 route=www.homepage1.com; # id=2

The output from the list command is formatted as NGINX Plus directives, which you can copy directly into the configuration file. For example, you can include this last known good configuration in the upstream block for smooth recovery when a reload is necessary.
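One way to keep such a last known good copy is to save the list output to a file on a schedule. This is a minimal sketch under our own assumptions: the function names `filter_server_lines` and `save_snapshot` and the file path in the usage note are hypothetical, and refusing to save an empty listing is a design choice that may not suit an upstream group that is legitimately empty.

```shell
# Keep only the "server ...;" lines from `list` output read on standard
# input, so that error messages never reach the snapshot.
filter_server_lines() {
    grep -E '^server [^;]+;' || true
}

# Write the filtered listing to the file named in $1. The file is written
# under a temporary name and then renamed, so a reload never sees a
# half-written file. Returns non-zero, leaving any previous snapshot in
# place, if the listing contains no server lines.
save_snapshot() {
    target="$1"
    tmp="${target}.tmp.$$"
    filter_server_lines > "$tmp"
    if [ -s "$tmp" ]; then
        mv "$tmp" "$target"
    else
        rm -f "$tmp"
        return 1
    fi
}
```

For example, `curl -s 'http://127.0.0.1:8888/upstream_conf?list=&upstream=vhosts' | save_snapshot /etc/nginx/vhosts-servers.conf` stores the current servers, and an `include /etc/nginx/vhosts-servers.conf;` line in the upstream block would load them at the next reload.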

To remove a server from an upstream, identify it by its ID (as reported by the list command):

    [user@frontend ]$ curl 'http://127.0.0.1:8888/upstream_conf?remove=&upstream=vhosts&id=2'
    server 127.0.0.1:8080 route=www.company1.com; # id=0
    server 10.0.0.10:8000 route=www.company2.com; # id=1

By default, changes you make with the upstream_conf API do not persist across NGINX Plus restarts or configuration reloads. In NGINX Plus Release 8 and later, you can include the state directive in the upstream block to make changes persistent. The directive names the file in which NGINX Plus stores state information for the servers in the upstream group. When it is included in the configuration, you cannot statically define servers using the server directive.
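Removal takes a numeric ID, while in a hosting setup you usually know the customer hostname instead. As an illustrative sketch, the ID can be looked up from the list output by route. The function name `id_for_route` is our own, and the parsing assumes the output format shown in this post.

```shell
# Print the ID of the server whose route equals $1, given `list` output on
# standard input. Prints nothing if no server has that route.
id_for_route() {
    awk -v route="$1" '
        $1 == "server" {
            id = ""; matched = 0
            for (i = 2; i <= NF; i++) {
                # Compare the whole field so that www.company1.com does not
                # also match www.company10.com.
                if ($i == ("route=" route ";")) matched = 1
                if ($i ~ /^id=[0-9]+$/) id = substr($i, 4)
            }
            if (matched && id != "") { print id; exit }
        }'
}
```

A removal by hostname then becomes two steps: `id=$(curl -s 'http://127.0.0.1:8888/upstream_conf?list=&upstream=vhosts' | id_for_route www.homepage1.com)`, followed by the remove request with `id=$id` once you have checked that `$id` is not empty.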

On-the-Fly Reconfiguration with the Live Activity Monitoring Dashboard

Another way to see your current server configuration and perform changes on the fly is with the NGINX Plus live activity monitoring dashboard. The configuration snippet above includes all the necessary directives for configuring live activity monitoring. After adding the directives to your configuration, you can access the dashboard by opening http://your-nginx-server:8888/status.html in a web browser on the NGINX Plus host.

Alternatively, you can access the JSON-formatted output from the live activity monitoring API at http://your-nginx-server:8888/status.

For a live demo of a working dashboard, check out http://demo.nginx.com/.

We have created a sample configuration that enables you to add and remove the entire set of backend servers in an upstream group using just the NGINX Plus upstream_conf API. Now you can perform backend and frontend changes as frequently as needed and reload NGINX Plus only when a significant change is required.

You can download the full NGINX Plus configuration file (which includes the snippet shown above) at https://www.nginx.com/resource/conf/upstream-host-route.conf.

Summary

As we've seen, in a highly loaded deployment, an NGINX or NGINX Plus configuration that relies on frequent reloads to add and remove hostnames and their backend services can lead to unnecessary increases in memory use and resulting performance problems. Upgrading your stack to NGINX Plus resolves this challenge with the help of on‑the‑fly reconfiguration APIs and our session persistence feature.

Use of these advanced features of NGINX Plus reduces the number of required process reloads, improves responsiveness to customer requests, provides better infrastructure scalability, and enables technical teams to spend their time on other, more critical tasks.

To try out the NGINX Plus features we’ve discussed, start your free 30-day trial today or contact us for a live demo.