The world is going digital at breakneck speed. Technology is front and center in our day‑to‑day lives. From entertainment to banking to how we communicate with our friends, technology has changed how we interact with the world. Businesses of all sizes and in all industries are rolling out compelling digital capabilities to attract, retain, and enrich customers. Every company is now a technology company.

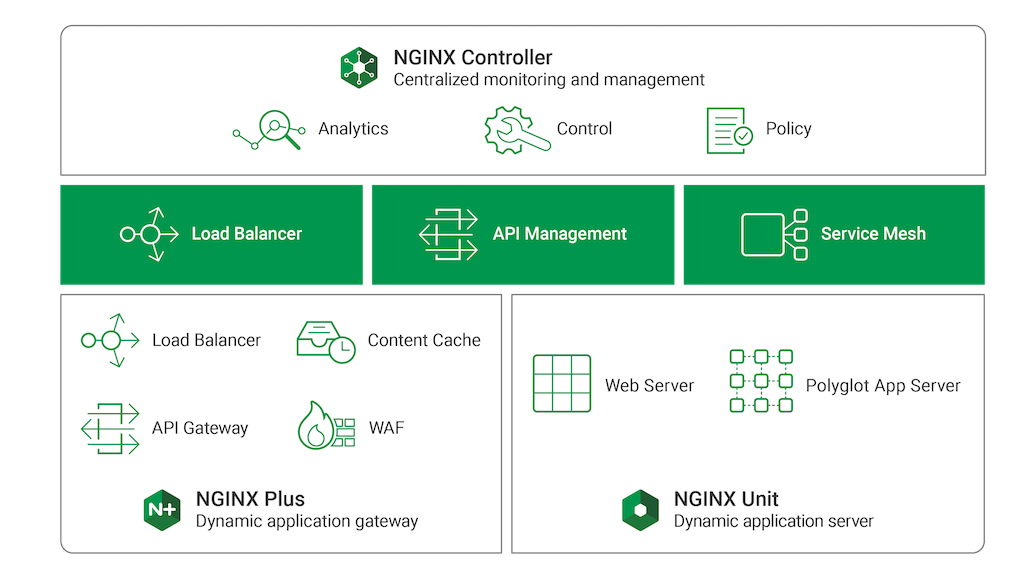

Companies on this journey need to master digital transformation, and many turn to NGINX to create a modern application stack. More than 330 million websites rely on our open source software. Our technology helps companies remove friction from digital delivery, optimize digital supply chains, and roll out digital services faster. The NGINX Application Platform is a consolidated set of tools that improves application performance, automates application delivery, and decreases capital and operational costs.

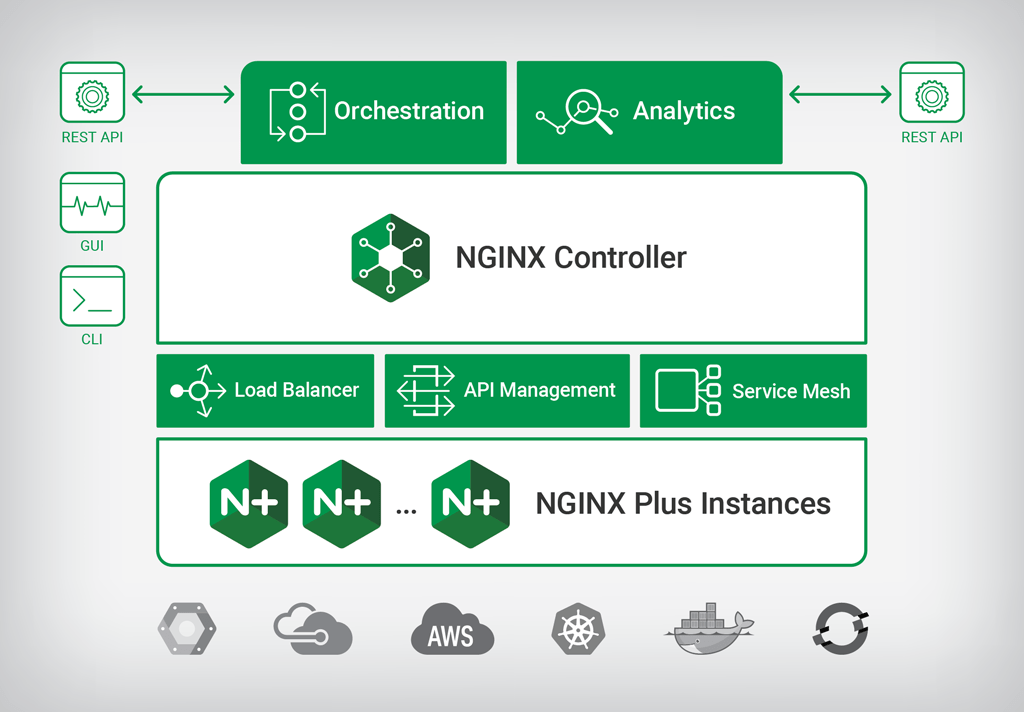

Today we are releasing the most substantial set of updates to the NGINX Application Platform since it was originally announced. These updates leverage the modular architecture of NGINX Controller to enable enterprises to provide the right workflows to the right teams managing NGINX Plus in the data plane. These capabilities extend NGINX’s ability to further simplify application infrastructures by consolidating application delivery, API management, and service mesh management into a single solution.

Here’s a quick summary of all three product releases (see below for more details):

- NGINX Controller 2.0 enhances modular architecture – You can now manage APIs with a plug‑in module for NGINX Controller. The new API Management Module lets you define an API endpoint and its policies through an intuitive UI. NGINX Controller 2.0 also updates the existing Load Balancer Module to improve configuration management. Finally, we are announcing development of a service mesh solution. The Service Mesh Module for NGINX Controller will simplify how organizations move from common Ingress patterns for containers to more complex service mesh architectures designed to manage dozens, hundreds, or thousands of microservices.

- NGINX Plus R16 debuts new dynamic clustering – The newest release of NGINX Plus includes the ability to share state and key‑value stores across a distributed cluster of NGINX Plus instances. This provides unique, dynamic capabilities like global rate limiting for API gateway and DDoS mitigation. NGINX Plus R16 also introduces new load‑balancing algorithms for Kubernetes Ingress control and microservices use cases; enhanced UDP support for environments like OpenVPN, voice over IP (VoIP), and virtual desktop infrastructure (VDI); and a new integration with AWS PrivateLink to help organizations accelerate hybrid cloud deployments.

- NGINX Unit 1.4 improves security and language support – NGINX Unit is an open source web and application server, made generally available in April 2018. NGINX Unit 1.4 adds TLS capabilities to provide “encryption everywhere”. NGINX Unit supports dynamic reconfiguration via API, which means zero‑downtime config changes with seamless certificate updates. When a new certificate is ready, an API call is all it takes to activate it, without killing or restarting application processes. NGINX Unit 1.4 also adds experimental support for JavaScript with Node.js, extending the current language support for Go, Perl, PHP, Python, and Ruby. Full support for JavaScript and Java is coming.

To learn more about the latest changes to the NGINX Application Platform, watch our keynote from NGINX Conf 2018 live or on demand.

Updates in Detail

NGINX Controller 2.0

NGINX Controller provides centralized monitoring and management for NGINX Plus. NGINX Controller 2.0 introduces a new modular architecture, similar to NGINX itself. With this new modular architecture in place, our plan is to create new modules that enhance the core NGINX Controller functionality. The load‑balancing functionality introduced in NGINX Controller 1.0 is itself now a module. The first new module that we are releasing in NGINX Controller 2.0 is the API Management Module, which will be available in Q4 of 2018. We are also announcing a new Service Mesh Module, slated for release in the first half of next year.

New API Management Module

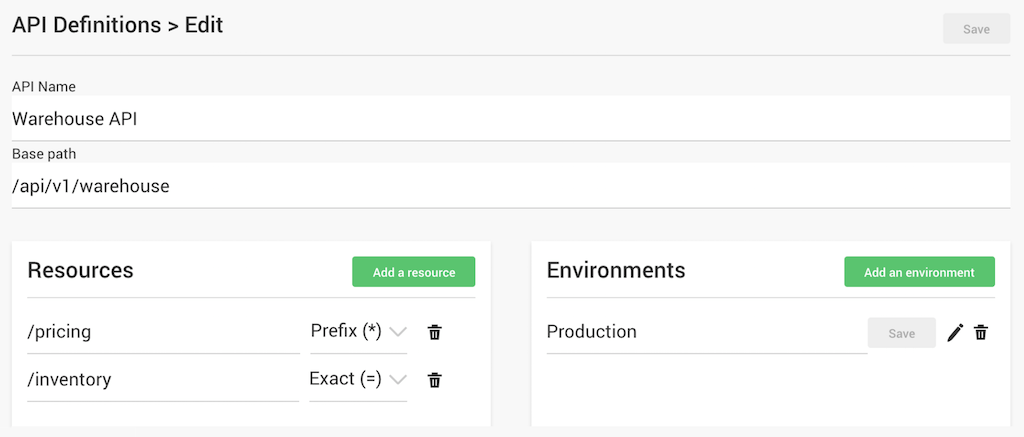

NGINX is releasing a new module for NGINX Controller that extends its capabilities to managing NGINX Plus as an API gateway. With this module, NGINX Controller provides API definition, monitoring, and gateway configuration management. This lighter‑weight, simpler solution provides a better alternative for managing enterprise API traffic compared to traditional API management solutions like Apigee and Axway.

With the NGINX Controller API Management Module, APIs are first‑class citizens. You define the base path for your API, the services underlying it (/pricing and /inventory in the example above), and the servers backing those services. You can also define per‑API policies such as authentication and rate limiting.
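To make the model concrete, here is a minimal Python sketch of how an API definition of this kind could be represented and how a request path might be matched to one of its services. The class and field names (`ApiDefinition`, `base_path`, `services`, `require_auth`, `rate_limit_rps`), the `/api/v1` base path, and the server addresses are hypothetical illustrations, not NGINX Controller's actual schema or API.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class ApiDefinition:
    """Hypothetical model of an API: a base path, services under it, and per-API policies."""
    base_path: str
    # Maps a service path (relative to base_path) to the servers backing it.
    services: Dict[str, List[str]] = field(default_factory=dict)
    # Illustrative per-API policies; these names are assumptions.
    require_auth: bool = True
    rate_limit_rps: int = 10

    def route(self, request_path: str) -> Optional[List[str]]:
        """Return the backend servers for request_path, or None if no service matches."""
        if not request_path.startswith(self.base_path):
            return None
        remainder = request_path[len(self.base_path):]
        # Longest-prefix match so that more specific services win.
        for service in sorted(self.services, key=len, reverse=True):
            if remainder == service or remainder.startswith(service + "/"):
                return self.services[service]
        return None


api = ApiDefinition(
    base_path="/api/v1",
    services={
        "/pricing": ["10.0.0.11:8080", "10.0.0.12:8080"],
        "/inventory": ["10.0.0.21:8080"],
    },
)

print(api.route("/api/v1/pricing/items/42"))  # ['10.0.0.11:8080', '10.0.0.12:8080']
print(api.route("/api/v1/inventory"))         # ['10.0.0.21:8080']
print(api.route("/api/v2/pricing"))           # None
```

Keeping the policies on the API object, rather than on individual servers, mirrors the idea in the text that authentication and rate limiting are defined per API.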

For more detail on the NGINX Controller API Management Module and how it works with NGINX Plus, see our companion blog post.

Enhanced Load Balancer Module

NGINX Controller 1.0, launched in June 2018, enables you to manage and monitor large NGINX Plus clusters from a single location. In NGINX Controller 2.0, we are packaging this capability in the Load Balancer Module and adding more features. The new features include advanced configuration management – enabling a configuration‑first approach for NGINX Plus instances with version control, diffing, and reverting – and a ServiceNow webhook integration.
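The version control, diffing, and reverting workflow can be pictured with a small Python sketch built on the standard library's `difflib`. The `ConfigHistory` class is a hypothetical illustration of the idea, not how NGINX Controller actually stores or compares configurations.

```python
import difflib
from typing import List


class ConfigHistory:
    """Hypothetical version history for an NGINX Plus configuration file."""

    def __init__(self, initial: str) -> None:
        self.versions: List[str] = [initial]

    @property
    def current(self) -> str:
        return self.versions[-1]

    def commit(self, new_config: str) -> int:
        """Record a new version and return its (0-based) version number."""
        self.versions.append(new_config)
        return len(self.versions) - 1

    def diff(self, old: int, new: int) -> str:
        """Unified diff between two stored versions."""
        return "".join(
            difflib.unified_diff(
                self.versions[old].splitlines(keepends=True),
                self.versions[new].splitlines(keepends=True),
                fromfile=f"v{old}",
                tofile=f"v{new}",
            )
        )

    def revert(self, version: int) -> int:
        """Revert by committing a copy of an earlier version, so history is preserved."""
        return self.commit(self.versions[version])


history = ConfigHistory("upstream backend {\n    server 10.0.0.11:8080;\n}\n")
history.commit("upstream backend {\n    server 10.0.0.11:8080;\n    server 10.0.0.12:8080;\n}\n")
print(history.diff(0, 1))
history.revert(0)
print(history.current == history.versions[0])  # True
```

Reverting by appending a copy, rather than deleting later versions, keeps a full audit trail of what was deployed and when.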

Upcoming Service Mesh Module

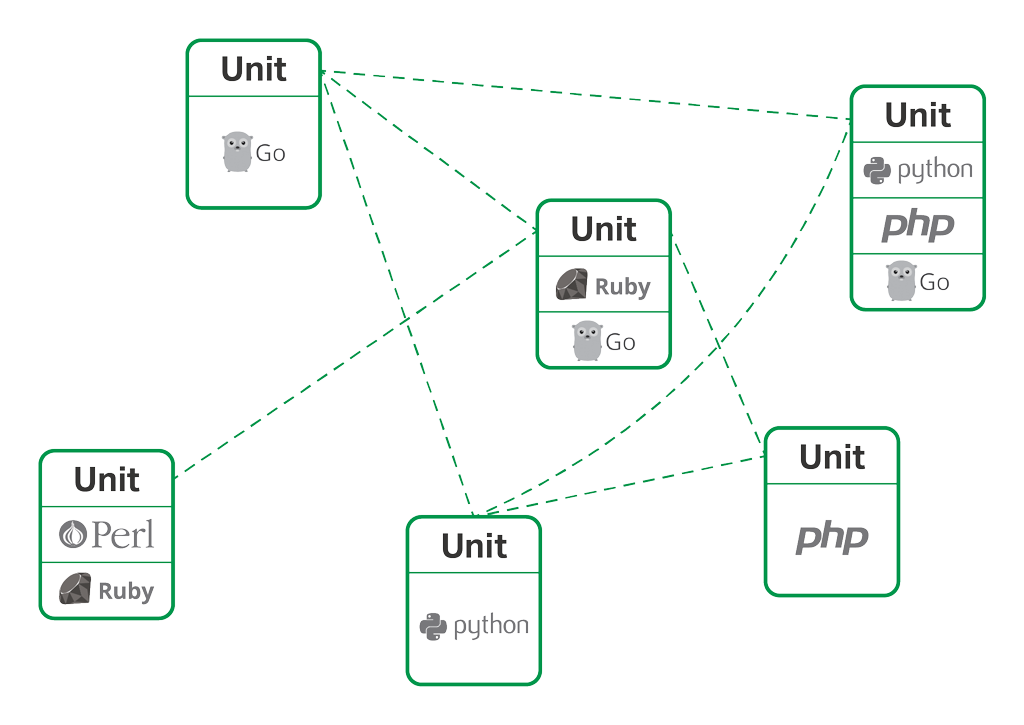

Service mesh architectures are emerging as a way to manage networking in a microservices environment. They seek to address the governance, security, and control issues that impact organizations when they deploy large numbers of microservices. The NGINX Controller Service Mesh Module builds on the Fabric Model of the NGINX Microservices Reference Architecture, launched two years ago. This new module enables NGINX Controller to manage and monitor service mesh deployments, apply traffic management policies, and simplify complex service‑to‑service workflows.

Introduction of the Service Mesh Module is planned for the first half of 2019.

NGINX Plus R16

NGINX Plus is an all‑in‑one load balancer, content cache, web server, and API gateway. It has enterprise‑ready features on top of the NGINX Open Source software. NGINX Plus R16 includes new clustering features, enhanced UDP load balancing, and DDoS mitigation that make it a more complete replacement for costly F5 BIG‑IP hardware and other load‑balancing infrastructure.

New features in NGINX Plus R16 include:

- Cluster‑aware rate limiting – You can now specify global rate limits applied across an NGINX Plus cluster. Global rate limiting is an important feature for API gateways and is an extremely popular use case for NGINX Plus.

- Cluster‑aware key‑value store – The NGINX Plus key‑value store can now be synchronized across a cluster and includes a new timeout parameter. The key‑value store can now be used to provide dynamic DDoS mitigation, distribute authenticated session tokens, and build a distributed content cache (CDN).

- Random with Two Choices load‑balancing algorithm – With this new algorithm, two backend servers are picked at random, and then the request is sent to the less loaded server of the two. Random with Two Choices is extremely efficient for clusters and will be the default in the next release of the NGINX Ingress Controller for Kubernetes.

- Enhanced UDP load balancing – NGINX Plus R16 can handle multiple UDP packets from the client, enabling support for more complex UDP‑based protocols and applications such as OpenVPN, VoIP, and VDI.

- AWS PrivateLink support – PrivateLink is Amazon’s technology for privately connecting a Virtual Private Cloud (VPC) to services without sending traffic over the public internet. With this release you can authenticate, route, load balance, and optimize traffic that reaches your services through PrivateLink.
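The Random with Two Choices idea from the list above is simple enough to show in a few lines. This Python sketch uses the number of active connections as the load metric; that metric, the `Backend` class, and the server names are assumptions for illustration, not NGINX Plus internals.

```python
import random
from dataclasses import dataclass
from typing import List


@dataclass
class Backend:
    name: str
    active_connections: int = 0


def pick_two_choices(backends: List[Backend], rng: random.Random) -> Backend:
    """Sample two distinct backends at random and return the less loaded one."""
    if len(backends) == 1:
        return backends[0]
    first, second = rng.sample(backends, 2)
    # Ties go to the first sample; either choice is acceptable.
    if second.active_connections < first.active_connections:
        return second
    return first


rng = random.Random(7)
pool = [Backend(f"app{i}") for i in range(5)]
for _ in range(1000):
    chosen = pick_two_choices(pool, rng)
    chosen.active_connections += 1  # simulate a long-lived request that never finishes

loads = [b.active_connections for b in pool]
print(loads, max(loads) - min(loads))  # the spread typically stays very small
```

Comparing just two random candidates avoids scanning every server on each request, which is why the approach suits setups where many independent load balancers each have only a partial, possibly stale view of backend load.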

To learn more about NGINX Plus R16, read our announcement blog.
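The effect of the cluster‑aware rate limiting described above can be simulated in Python. Each limiter below applies a leaky‑bucket check (the method NGINX's `limit_req` uses) and represents one NGINX Plus node. Passing a single shared dictionary to several limiters is a simplifying assumption: in a real cluster the state is synchronized between instances over the network, so nodes may briefly see slightly stale values. The class, the `accepted` helper, and the rate and capacity values are hypothetical.

```python
from typing import Dict, List, Tuple


class LeakyBucketLimiter:
    """Leaky-bucket rate limiter, similar in spirit to NGINX's limit_req.

    `state` maps a client key to (bucket level, timestamp of last update).
    Passing the same dict to several limiters models cluster-wide shared state.
    """

    def __init__(self, state: Dict[str, Tuple[float, float]], rate: float, capacity: float) -> None:
        self.state = state
        self.rate = rate          # requests drained per second
        self.capacity = capacity  # maximum bucket level before rejecting

    def allow(self, key: str, now: float) -> bool:
        level, last = self.state.get(key, (0.0, now))
        # Drain the bucket for the time elapsed since the last update.
        level = max(0.0, level - (now - last) * self.rate)
        if level + 1 > self.capacity:
            return False  # rejected requests do not change the bucket
        self.state[key] = (level + 1, now)
        return True


def accepted(limiters: List[LeakyBucketLimiter], requests_per_node: int, now: float = 0.0) -> int:
    """Send the same burst from one client to every node and count acceptances."""
    return sum(
        limiter.allow("client-1", now)
        for limiter in limiters
        for _ in range(requests_per_node)
    )


# Cluster-aware: both nodes consult one (idealized) synchronized state.
shared: Dict[str, Tuple[float, float]] = {}
cluster = [LeakyBucketLimiter(shared, rate=1.0, capacity=5) for _ in range(2)]

# Per-node limits: each node keeps its own state.
isolated = [LeakyBucketLimiter({}, rate=1.0, capacity=5) for _ in range(2)]

print(accepted(cluster, 4))   # 5: the global limit holds across the cluster
print(accepted(isolated, 4))  # 8: each node enforces the limit separately
```

Without shared state, a client that spreads requests across nodes gets roughly N times the intended limit; sharing state is what turns per‑node limits into a global one.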

NGINX Unit 1.4

NGINX Unit is a dynamic web and application server created by Igor Sysoev, original author of NGINX Open Source. With NGINX Unit you can run applications written in Go, Perl, PHP, Python, and Ruby on the same server. It is fully dynamic with a REST API‑driven JSON configuration syntax. All configuration changes happen in memory, so there are no service restarts or reloads.
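Because the whole configuration is a JSON document addressed by URL‑style paths, a change amounts to replacing one subtree in memory. The Python sketch below models that idea with a hypothetical `put` helper; it is not NGINX Unit's code, and the listener, application names, and keys are illustrative, loosely modeled on Unit's documented configuration (exact keys may differ between Unit versions).

```python
import copy
import json
from typing import Any, Dict


def put(config: Dict[str, Any], path: str, value: Any) -> Dict[str, Any]:
    """Return a new config with the subtree at `path` (e.g. "/listeners/*:8080") replaced.

    The original dict is left untouched, so a running server could keep serving
    from the old tree until the new one is ready to swap in.
    """
    new_config = copy.deepcopy(config)
    keys = [k for k in path.strip("/").split("/") if k]
    if not keys:
        raise ValueError("path must name at least one key")
    node = new_config
    for key in keys[:-1]:
        node = node.setdefault(key, {})
    node[keys[-1]] = value
    return new_config


config = {
    "listeners": {"*:8080": {"application": "blog"}},
    "applications": {
        "blog": {"type": "python", "path": "/www/blog", "module": "wsgi"},
    },
}

# Add a second application, then point the listener at it; nothing else changes.
updated = put(config, "/applications/shop", {"type": "php", "root": "/www/shop"})
updated = put(updated, "/listeners/*:8080", {"application": "shop"})
print(json.dumps(updated["listeners"]))  # {"*:8080": {"application": "shop"}}
```

Building the new tree alongside the old one and swapping references is one way to get changes without restarts: in‑flight requests finish against the old configuration while new ones use the updated tree.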

New in NGINX Unit 1.4 is support for SSL/TLS encryption, with a certificate storage API that provides detailed information about your certificate chains, including common and alternative names as well as expiration dates.
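As an illustration of what the certificate details make possible, the Python sketch below computes how many days remain before a stored certificate chain expires. The response shape (a `chain` list whose entries carry `subject` and `validity.until` fields) and the date format are assumptions modeled loosely on NGINX Unit's documentation; check the exact field names against the version you run.

```python
from datetime import datetime, timezone
from typing import Any, Dict


def days_until_expiry(cert_info: Dict[str, Any], now: datetime) -> int:
    """Return whole days until the earliest-expiring certificate in the chain."""
    expirations = [
        datetime.strptime(entry["validity"]["until"], "%b %d %H:%M:%S %Y GMT").replace(
            tzinfo=timezone.utc
        )
        for entry in cert_info["chain"]
    ]
    return (min(expirations) - now).days


# Hypothetical certificate information for a bundle named "bundle".
cert_info = {
    "key": "RSA (2048 bits)",
    "chain": [
        {
            "subject": {"common_name": "example.com", "alt_names": ["www.example.com"]},
            "validity": {"since": "Sep 18 19:46:19 2018 GMT", "until": "Jun 15 19:46:19 2021 GMT"},
        },
        {
            "subject": {"common_name": "Example Intermediate CA"},
            "validity": {"since": "Jan 01 00:00:00 2018 GMT", "until": "Jan 01 00:00:00 2028 GMT"},
        },
    ],
}

now = datetime(2021, 6, 1, tzinfo=timezone.utc)
print(days_until_expiry(cert_info, now))  # 14
```

A check like this could run on a schedule and trigger the API call that swaps in a renewed certificate before the earliest one in the chain lapses.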

Additionally, we are releasing preliminary Node.js support. We are also working on full Java support, WebSocket, flexible request routing, and serving of static content.

NGINX Unit is open source; try it today.

NGINX as a Dynamic App Development and Delivery Stack

With these new capabilities, NGINX is now the only vendor helping infrastructure teams build a dynamic ingress and egress tier that optimizes north‑south traffic, as well as a dynamic backend that helps app teams develop and scale east‑west traffic for both monolithic and microservices apps.

This dynamic application development and delivery stack can be deployed as a:

- Dynamic application gateway – The NGINX Application Platform is the industry’s only solution that combines reverse proxy, cache, load balancer, API gateway, and WAF functions into a single, dynamic application gateway for north‑south app and API traffic. New clustering and state‑sharing features in R16 combined with new load‑balancing and API‑management features in NGINX Controller provide a solution that adapts in real time to changing application, security, and performance needs. Traditional application delivery controllers (ADCs) do not provide API gateway functionality and lack the flexibility to act as a single, distributed ingress and egress tier that frontends any app across any cloud.

- Dynamic application infrastructure – NGINX is the most popular web server for high‑performance sites and applications. But as organizations journey from monoliths to microservices, they need additional infrastructure. NGINX addresses this by complementing its industry‑leading Kubernetes Ingress controller with upcoming NGINX Controller enhancements that provide a lightweight service mesh. Combining those capabilities with NGINX Unit’s ability to execute both monolithic and microservice application code, we now offer the industry’s only dynamic application infrastructure that provides both web and application servers with optimized east‑west traffic for companies at any stage of microservices maturity.

NGINX’s VP of Product Management, Sidney Rabsatt, covers all of these exciting announcements in detail during his keynote at NGINX Conf 2018. Tune in live at 9:45 AM ET today, October 9, or watch on demand any time by visiting the same URL after NGINX Conf.